copy.fail: From kernel CVE to Kubernetes Container Escape

My previous posts looked at what happens when a shared object — the LLM KV cache — has no per-tenant namespace: co-tenants can read each other’s data and starve each other’s resources. Coincidentally, the newly dropped CVE-2026-31431 (copy.fail) is the same pattern at a lower layer. The shared object is the Linux page cache (yup, a cache again!). The co-tenants are Kubernetes pods. The isolation boundary that does not exist is the host kernel.

The xint.io team published a writeup of the LPE vector; as of the time of this post, their container-escape follow-up is not yet out. This post covers a concrete container escape chain against Talos Linux and what the vulnerability class says about shared-kernel container security.

TL;DR

copy.fail is a local privilege escalation — but the xint.io authors note it can also be used to escape Kubernetes pods. In that scenario, the LPE itself is not the point: the attacker is already running in a pod and doesn’t need to escalate within it. Instead, copy.fail is used as a page cache corruption primitive. Containers on the same node share the host kernel, and through it, the page cache: the kernel’s in-memory representation of file contents. If two containers share an image layer, they share page-cache pages for every file in it. Corrupt the right file in a layer shared with a privileged container, and you get code execution in that container’s context — no LPE in the attacker’s pod required.

Talos Linux — a minimal, immutable OS for Kubernetes with no shell, no SSH, and a read-only root filesystem — is a concrete example where this plays out. On Talos worker nodes, kube-proxy and user workload containers share an overlayfs layer containing /usr/sbin/nft. kube-proxy calls nft as root every few seconds to reconcile nftables rules. An attacker in an unprivileged pod overwrites nft’s page-cache pages with shellcode and waits. The next reconciliation tick executes it as root, with access to the host filesystem.

At the end I discuss how microVM and sandboxed runtime architectures address this class of vulnerability by design.

1. Background: the copy.fail primitive

The full mechanics are covered in the xint.io writeup, and IMO the PoC exploit is very elegant. The short version:

Linux exposes kernel crypto to unprivileged userspace via AF_ALG sockets. A 2017 “in-place optimization” allowed the AEAD encryption engine to use the destination buffer as scratch space during intermediate steps — a reasonable choice, unless that destination buffer is backed by page cache pages.
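To check whether that interface is reachable from a given pod at all, a small probe is enough. This is a minimal sketch, not part of the published PoC: it only opens and binds an AF_ALG socket and performs no crypto operation. The function name and the choice of `gcm(aes)` as the AEAD to bind are my assumptions for illustration.

```python
import socket


def af_alg_available(alg_type: str = "aead", alg_name: str = "gcm(aes)") -> bool:
    """Return True if this process can open and bind an AF_ALG socket.

    The algorithm name is an illustrative assumption; any AEAD the kernel
    offers would do for an exposure check.
    """
    family = getattr(socket, "AF_ALG", None)  # only defined on Linux builds
    if family is None:
        return False
    try:
        with socket.socket(family, socket.SOCK_SEQPACKET, 0) as sock:
            sock.bind((alg_type, alg_name))
        return True
    except OSError:
        # Blocked by seccomp, missing kernel module, or unsupported algorithm.
        return False


if __name__ == "__main__":
    print("AF_ALG reachable:", af_alg_available())
```

A result of `True` only says the kernel crypto socket interface is exposed to the workload; it says nothing about whether the kernel is patched.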

The exploit feeds file data into an encryption operation using splice(), which passes page-cache pages directly rather than copying them. The AEAD engine writes a small amount of attacker-controlled data into those pages as scratch. The file on disk is untouched. The kernel’s in-memory view of it changes. No filesystem permissions are checked; no root is required; any process that can open an AF_ALG socket and read a file can do this.
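Because the disk copy stays intact while the cached copy changes, one way to look for this kind of tampering is to hash a file twice: once through the page cache and once with `O_DIRECT`, which bypasses it. The sketch below is a heuristic under assumptions, not a vetted detector: the helper names are mine, `O_DIRECT` is not supported on every filesystem (tmpfs, some overlay setups), and a file that is legitimately being written can also differ briefly.

```python
import hashlib
import mmap
import os

CHUNK = 1 << 20  # 1 MiB: a multiple of common block sizes, as O_DIRECT requires


def _hash_cached(path: str) -> str:
    """Hash the file as ordinary readers see it (served from the page cache)."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for block in iter(lambda: handle.read(CHUNK), b""):
            digest.update(block)
    return digest.hexdigest()


def _hash_direct(path: str):
    """Hash the on-disk bytes via O_DIRECT, or return None if unsupported."""
    flag = getattr(os, "O_DIRECT", None)
    if flag is None:
        return None
    try:
        fd = os.open(path, os.O_RDONLY | flag)
    except OSError:
        return None
    buf = mmap.mmap(-1, CHUNK)  # anonymous mapping gives a page-aligned buffer
    digest = hashlib.sha256()
    try:
        size = os.fstat(fd).st_size
        pos = 0
        while pos < size:
            n = os.preadv(fd, [buf], pos)
            if n <= 0:
                break
            digest.update(buf[:n])
            pos += n
        return digest.hexdigest()
    except OSError:
        return None
    finally:
        buf.close()
        os.close(fd)


def cache_diverges_from_disk(path: str):
    """True if cached and on-disk contents differ, None if it cannot be checked."""
    direct = _hash_direct(path)
    if direct is None:
        return None
    return direct != _hash_cached(path)
```

On a healthy file this should return `False`; `None` means the check could not run on that filesystem.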

2. Why the page cache crosses container boundaries

The Linux page cache is the kernel’s in-memory cache of file contents. When a process reads a file, the kernel loads it into the page cache and serves future reads from there. Writes to the page cache via copy.fail are immediately visible to any other process reading the same file — regardless of which container that process is in.
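The sharing itself is ordinary kernel behaviour and easy to observe with a file you are allowed to write. The sketch below (function name is mine) modifies a temporary file through a shared memory mapping and reads it back through a completely separate file descriptor, without any flush in between. The difference with copy.fail is that this legitimate path requires write permission on the file.

```python
import mmap
import os
import tempfile


def shared_cache_demo() -> bytes:
    """Write via one mapping, read via an unrelated descriptor, no flush."""
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(b"original contents\n")
        path = tmp.name
    try:
        writer_fd = os.open(path, os.O_RDWR)
        reader_fd = os.open(path, os.O_RDONLY)
        try:
            # ACCESS_WRITE creates a shared mapping backed by page-cache pages.
            with mmap.mmap(writer_fd, 0, access=mmap.ACCESS_WRITE) as view:
                view[0:8] = b"MODIFIED"
                # The reader has its own descriptor but sees the same pages.
                return os.pread(reader_fd, 8, 0)
        finally:
            os.close(writer_fd)
            os.close(reader_fd)
    finally:
        os.unlink(path)


if __name__ == "__main__":
    print(shared_cache_demo())
```

The reader sees the new bytes immediately because both descriptors resolve to the same cached pages, which is the property the exploit relies on.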

Linux namespaces isolate process trees, network interfaces, mount points, and user IDs. The page cache has no namespace. There is no per-container view of cached file contents. Every container on a node shares one page cache, managed by one kernel.
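You can see the list of things the kernel does namespace by looking at `/proc/<pid>/ns`. A small sketch (the function name is mine, and the exact entries depend on kernel version):

```python
import os


def namespace_kinds(pid: str = "self") -> list:
    """List the namespace types the kernel exposes for a process.

    Returns an empty list on systems without /proc/<pid>/ns.
    """
    try:
        return sorted(os.listdir(f"/proc/{pid}/ns"))
    except OSError:
        return []


if __name__ == "__main__":
    for kind in namespace_kinds():
        print(kind)
```

On a typical Linux host the entries cover things like mount, network, PID, user, and UTS namespaces. Nothing in that directory corresponds to cached file contents.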

Container images are composed of layers. containerd stores each unique layer once on disk; multiple containers that share a base image mount the same underlying inodes through overlayfs. Shared inodes mean shared page cache pages. If two containers on the same node have pulled images that share a layer, any file in that layer lives in memory exactly once, visible to both.
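The "same inode" condition can be checked with a plain `stat()` comparison. The sketch below is illustrative and uses a hard link to stand in for a shared layer file; the function name is mine. One caveat I'm aware of: on overlayfs the reported `st_dev` can be that of the overlay mount instead of the underlying filesystem, so a real audit is probably more reliable when it compares the layer files in the snapshotter's directory on the host than when it compares paths as seen from inside two containers.

```python
import os
import shutil
import tempfile


def same_inode(path_a: str, path_b: str) -> bool:
    """True if both paths resolve to the same (device, inode) pair.

    Equivalent to os.path.samefile, spelled out for clarity.
    """
    a, b = os.stat(path_a), os.stat(path_b)
    return (a.st_dev, a.st_ino) == (b.st_dev, b.st_ino)


if __name__ == "__main__":
    workdir = tempfile.mkdtemp()
    try:
        original = os.path.join(workdir, "nft")
        linked = os.path.join(workdir, "nft-hardlink")
        copied = os.path.join(workdir, "nft-copy")
        with open(original, "wb") as handle:
            handle.write(b"placeholder binary")
        os.link(original, linked)        # same inode, second name
        shutil.copy(original, copied)    # same bytes, different inode
        print("hard link shares inode:", same_inode(original, linked))
        print("copy shares inode:", same_inode(original, copied))
    finally:
        shutil.rmtree(workdir)
```

Identical content is not enough: only paths backed by the same inode share page-cache pages.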

3. The Talos escape chain

Talos Linux is marketed with the claim that “Talos Linux is the best OS for Kubernetes”. It ships with a security-focused design: no SSH daemon, no package manager, no operator shell, a read-only root filesystem, and a gRPC-only management plane. Unfortunately, all of that hardening operates at the OS level, and copy.fail operates below it, in the kernel. The Talos security team documented the vector in advisory GHSA-m38g-vww2-mvgx and patched it in v1.12.7 and v1.13.0, which ship an updated Linux kernel.

In kill-chain terms, the attack runs: initial access to an unprivileged pod → page cache corruption via copy.fail → hijacked code execution in a privileged pod via a shared layer → container escape to the host. The escape has four components:

Shared layer with a privileged trigger. kube-proxy runs as root on every Talos node, and its image shares a layer containing /usr/sbin/nft with images built on compatible base distributions. kube-proxy calls nft automatically every few seconds to reconcile nftables rules with the cluster’s Service objects.

The exploit loop. The attacker’s process, running as an unprivileged user with no capabilities, opens an AF_ALG socket, opens the nft binary read-only, and splices its pages into an AEAD encryption operation. Each call writes four bytes of shellcode into the page-cache image of nft at a chosen offset. After enough iterations to cover the payload, the targeted region of the in-memory binary has been replaced with shellcode. The disk binary is untouched.

Automatic execution. kube-proxy’s next reconciliation tick — within five seconds — causes the kernel to exec what it believes is nft. It is the attacker’s shellcode, running as root inside a privileged pod.

Host filesystem access. kube-proxy runs with privileged: true, which grants CAP_SYS_ADMIN and access to host block devices. The shellcode can mount the host root filesystem and read or modify anything on it — kubeconfig credentials, certificates, Talos configuration, etcd data. Full node compromise.

Architecture: attacker pod and kube-proxy share the same page-cache pages through a common overlayfs layer, giving the attacker write access to memory that kube-proxy will execute.

    graph TB
      subgraph node["Talos Node"]
        subgraph ap["Attacker Pod<br/>unprivileged · no capabilities<br/>Runs copy.fail exploit to replace nft in-memory with shellcode"]
        end
        subgraph kp["kube-proxy<br/>root · privileged: true · hostNetwork: true<br/>Runs nft on schedule and executes shellcode"]
        end
        subgraph layer["Shared overlayfs lower layer"]
          nft["/usr/sbin/nft · same inode"]
        end
        subgraph kern["Linux Kernel"]
          pc["Page Cache<br/>nft inode · same RAM"]
        end
        hfs["Host Filesystem<br/>kubeconfig · certs · Talos config<br/>Accessible via block device mount"]
        ap ~~~ kp
        ap --- layer
        kp --- layer
        layer --- pc
        kp --- hfs
      end

Attack sequence: the exploit loop overwrites nft’s page-cache pages 4 bytes at a time; kube-proxy’s next scheduled execution runs the shellcode and gains access to the host filesystem.

    sequenceDiagram
      participant A as Attacker pod<br/>(unprivileged)
      participant OL as overlayfs<br/>lower layer
      participant PC as Page Cache<br/>(nft inode)
      participant KP as kube-proxy pod<br/>(root · privileged)
      participant H as Host Filesystem
      A->>OL: open("/usr/sbin/nft", O_RDONLY)
      OL-->>A: fd → shared lower-layer inode
      loop payload: 4 bytes at offset i
        A->>PC: socket(AF_ALG) + splice(fd→pipe→ALG)
        Note over PC: authencesn scratch write<br/>payload[i:i+4] → page cache[i:i+4]
      end
      Note over A: page cache poisoned<br/>disk binary untouched
      Note over KP: ~5 seconds later<br/>(scheduled)
      KP->>PC: exec("/usr/sbin/nft")
      PC-->>KP: serves shellcode<br/>(not real nft binary)
      Note over KP: shellcode runs as root<br/>inside privileged pod
      KP->>H: mount /dev/{host root} → /mnt/host
      KP->>H: read/write host filesystem<br/>(kubeconfig, certs, Talos config)

4. Attack surface in production clusters

The Talos chain requires layer overlap between the attacker’s container and a privileged process. In a production cluster where the attacker finds themselves in a pod they didn’t build, that overlap is not guaranteed — but the surface is larger than it looks.

Shared base images. Most organizations build both application containers and cluster infrastructure (DaemonSets, agents, operators) from a small number of internal base images, often the same one, to standardize patching cadence. That creates the layer overlap this attack needs.

CNI plugins. Cilium, Calico, Flannel, and Weave run as privileged DaemonSets on every node with CAP_NET_ADMIN or CAP_SYS_ADMIN. They ship binaries — eBPF loaders, nftables wrappers, VXLAN tools — that may appear in a shared layer if the attacker’s image is based on a compatible distribution.

Monitoring and logging agents. Prometheus node_exporter, Datadog agents, Falco, Fluentd: all commonly deployed as DaemonSets with elevated privileges or host mounts, all touching the filesystem on a schedule. A monitoring agent that periodically calls a shell or a compression tool from a shared layer is structurally identical to the kube-proxy/nft pattern.

There is an irony here: a workload running with no added capabilities and a distroless image is a poor target for this attack. The security agents deployed to watch it are better targets — always present and privileged by definition. The tooling added to monitor for anomalies itself becomes the attack surface.

CSI drivers. AWS, GCP, and Azure CSI storage drivers are privileged DaemonSets that invoke mount, resize2fs, and related tools regularly.

Setuid binaries as deferred triggers. Even without a continuously-running privileged process as a trigger, a setuid binary in a shared layer is exploitable when a pod restart or rolling deployment causes a privileged init container to exec it. Kubernetes restarts pods constantly.

The attacker’s recon. From inside a container, /proc/*/exe symlinks and inode comparisons reveal which binaries are being executed by other processes and whether the attacker shares page-cache pages with them. The recon is cheap and requires no special permissions.

The overlap requirement makes this harder than a pure LPE. In a cluster with strict image provenance — application images and system DaemonSets built from entirely separate supply chains — the overlap may genuinely not exist. But clusters tend toward sprawl, and the surface grows with every additional agent and plugin.
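Whether the overlap exists in a given cluster can be estimated from image manifests alone, since each manifest lists its layer digests. The sketch below assumes you have already collected those lists per image; the function name, image names, and digests are hypothetical placeholders, not real values.

```python
from itertools import combinations


def shared_layers(images: dict) -> dict:
    """Map each pair of images to the layer digests they have in common.

    `images` maps an image name to its list of layer digests.
    Pairs with no overlap are omitted.
    """
    overlaps = {}
    for (name_a, layers_a), (name_b, layers_b) in combinations(sorted(images.items()), 2):
        common = set(layers_a) & set(layers_b)
        if common:
            overlaps[(name_a, name_b)] = sorted(common)
    return overlaps


if __name__ == "__main__":
    # Hypothetical inventory: digests shortened for readability.
    inventory = {
        "app/web": ["sha256:base01", "sha256:web02"],
        "infra/node-agent": ["sha256:base01", "sha256:agent03"],
        "infra/distroless-proxy": ["sha256:static09"],
    }
    for pair, digests in shared_layers(inventory).items():
        print(pair, digests)
```

Any pair that includes both an application image and a privileged DaemonSet image is worth a closer look. A shared digest is necessary for the attack but not sufficient: the privileged process still has to execute a file from that layer.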

I’m curious which of these vectors the xint.io team will cover in their part 2 — or whether they’ll use a completely different approach.

5. The shared kernel problem and what to do about it

The container boundary is a namespace boundary, not a kernel boundary. Every piece of OS hardening — Talos’s read-only root, absent shell, gRPC-only management — operates above the kernel. copy.fail operates at the kernel. This is not specific to Talos: any shared-kernel container OS is subject to the same class of attack. Patching copy.fail addresses this specific CVE; the architectural question is what prevents the next one.

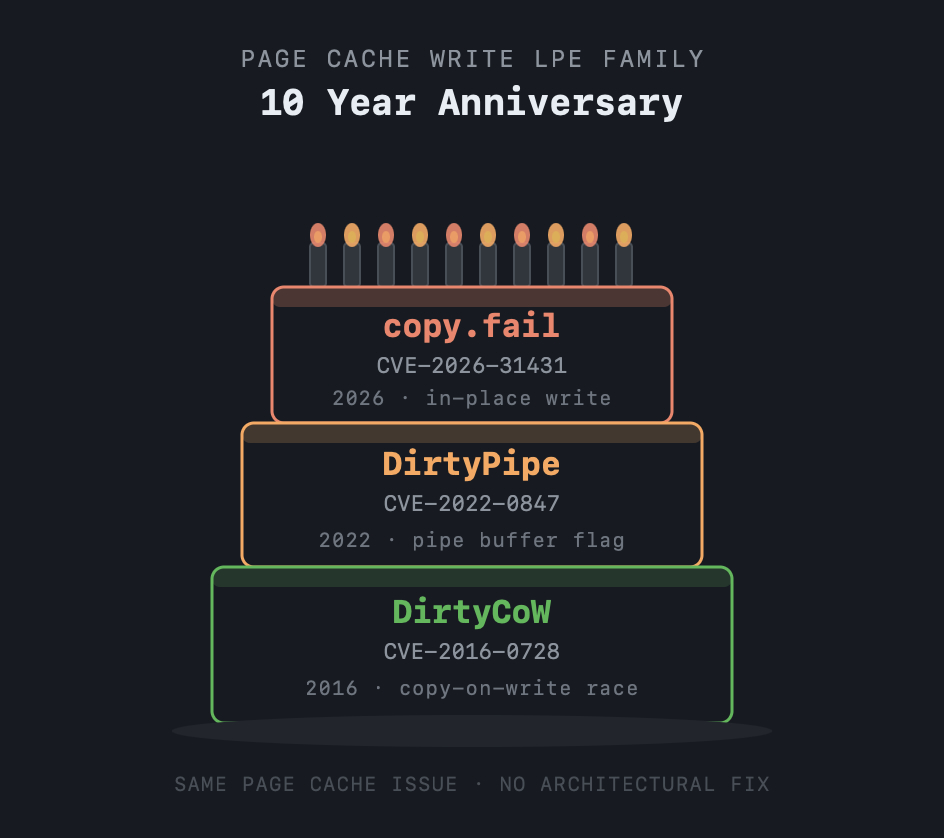

This vulnerability class has a ten-year track record. DirtyCow (CVE-2016-5195) exploited a race condition in copy-on-write handling to corrupt page cache pages and write to read-only files; container escapes followed. DirtyPipe (CVE-2022-0847) exploited a pipe buffer flag bug to achieve the same page cache write primitive; Replit documented how modifications were immediately visible across all containers on the same host, even with unprivileged containers and hardening in place. copy.fail (2026) uses the AEAD in-place optimization. Three separate bugs across a decade, all exploiting the same architectural property: the page cache has no tenant boundary. Each time, the kernel was patched. No structural change followed. So what solutions emerged to actually address this class of vulnerability?

Sandboxed runtimes (gVisor). gVisor’s runsc runtime intercepts application syscalls in a userspace kernel called the Sentry, which implements the Linux syscall surface itself instead of passing those calls through to the host kernel. An AF_ALG socket call from inside a gVisor container is handled entirely by the Sentry, so the host kernel’s algif_aead.c is never invoked. The copy.fail primitive is not reachable from inside a gVisor container. gVisor is used in production at Google and is available as a node pool option in GKE.
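In Kubernetes, opting a workload into a sandboxed runtime is done through a RuntimeClass object and the pod's `runtimeClassName` field. The sketch below generates both manifests as JSON, which `kubectl apply -f` accepts. The class name `gvisor`, the handler name `runsc`, and the pod and image names are assumptions: the handler has to match whatever your node's container runtime configuration registers.

```python
import json


def gvisor_manifests(pod_name: str, image: str,
                     runtime_class: str = "gvisor", handler: str = "runsc"):
    """Build a RuntimeClass and a Pod that references it.

    `runtime_class` and `handler` are illustrative defaults, not values
    guaranteed to exist on any given cluster.
    """
    runtime = {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": runtime_class},
        "handler": handler,
    }
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            "runtimeClassName": runtime_class,
            "containers": [{"name": pod_name, "image": image}],
        },
    }
    return runtime, pod


if __name__ == "__main__":
    # Hypothetical pod name and image.
    for manifest in gvisor_manifests("untrusted-job", "registry.example/job:1.0"):
        print(json.dumps(manifest, indent=2))
```

Pods without `runtimeClassName` keep using the node's default runtime, so the sandbox only covers workloads that opt in or are forced in by admission policy.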

MicroVMs (Firecracker). Firecracker runs each workload inside a lightweight virtual machine with its own kernel, typically adding only ~125 ms to cold start with negligible steady-state overhead. The page cache is per-VM; it cannot cross VM boundaries. A copy.fail exploit in one VM writes into that VM’s own page cache and goes no further. The host kernel and all other workloads are unaffected. Firecracker is the runtime behind AWS Lambda and Fargate.

Both approaches trade some performance or compatibility for a structural guarantee that kernel CVEs in one workload cannot reach other workloads — something no amount of OS hardening on a shared-kernel architecture can provide.

| | Container | gVisor | Firecracker |
|---|---|---|---|
| Kernel shared | Yes | No | No |
| copy.fail reachable | Yes | No | No |
| Boundary type | Software (seccomp) | Software (Sentry) | Hardware (KVM) |
| Host attack surface | Large | ~50 syscalls | KVM + minimal VMM |
| Guest kernel | Shared host | Sentry (userspace) | Vendor-built, stripped |
| Memory overhead | ~MB | ~15 MB | ~125 MB |
| Syscall compat | Full | Partial | Full |

References

- copy.fail vulnerability: copy.fail

- xint.io LPE writeup (part 1): xint.io/blog/copy-fail-linux-distributions

- Talos security advisory: GHSA-m38g-vww2-mvgx

- DirtyPipe cross-container contamination by Replit: blog.replit.com/dirtypipe-kernel-vulnerability

- DirtyCow (CVE-2016-5195): dirtycow.ninja

- gVisor sandboxed runtime: gvisor.dev

- Firecracker microVM: firecracker-microvm.github.io